TL;DR

Start with Triton for 90% of LLM kernel optimization work: it gives you development velocity, cross-architecture portability, operation fusion, and built-in autotuning. Reach for CUDA when profiling shows you've hit Triton's ceiling: maximum hardware utilization on specific operations, non-standard memory hierarchies, sparse computation, or warp-level primitives. OneInfer's Kernel Forge defaults to Triton and falls back to CUDA only where measurement justifies it.

What Each Language Actually Is

CUDA is NVIDIA's parallel computing platform and programming model, built as an extension of C/C++. You manage thread blocks, warps, shared memory, and memory access coalescing explicitly. That means complete control over GPU hardware, and complete responsibility for it.

Triton is an open-source DSL and compiler from OpenAI that compiles Python-syntax kernel code into efficient GPU machine code. It abstracts thread-level parallelism while exposing tiling, memory access patterns, and fusion — the performance-critical decisions high-level frameworks don't let you touch.

Both run on NVIDIA. Triton has growing AMD support. Both can achieve state-of-the-art performance on the right workloads.
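To make the block-level programming model concrete, here is a minimal sketch that emulates it in plain Python so it runs without a GPU. It is not Triton code: the function names `vector_add_program` and `launch` are made up for illustration. In a real Triton kernel the same three ideas appear as `tl.program_id`, `tl.arange`, and masked `tl.load`/`tl.store`.

```python
def vector_add_program(pid, x, y, out, n_elements, block_size):
    """One 'program instance': processes a single tile of block_size elements."""
    # Offsets covered by this tile (Triton builds these with tl.arange).
    offsets = [pid * block_size + i for i in range(block_size)]
    for off in offsets:
        # Mask: the last tile may run past the end of the data.
        if off < n_elements:
            out[off] = x[off] + y[off]


def launch(x, y, block_size=4):
    """Emulated launch: one program instance per tile."""
    n_elements = len(x)
    out = [0.0] * n_elements
    grid = (n_elements + block_size - 1) // block_size  # ceiling division
    for pid in range(grid):  # on a GPU these instances run in parallel
        vector_add_program(pid, x, y, out, n_elements, block_size)
    return out


result = launch([1.0] * 10, [2.0] * 10)
print(result)
```

The point of the sketch is what you do not write: there is no per-thread indexing, only a tile size and a mask. The compiler decides how the work inside each tile maps onto threads.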

Where Triton Wins

Development velocity. A kernel that takes a senior CUDA engineer 3 days can often be written in 4–8 hours in Triton. That velocity advantage compounds across iteration cycles.

Cross-architecture portability. Triton's compiler targets abstract hardware capabilities. A100-tuned kernels typically compile unchanged and perform well on H100, though re-running the autotuner on the new GPU is still worthwhile. CUDA kernels often need meaningful retuning per generation.

Operation fusion. Triton makes it natural to fuse multiple operations into one kernel pass — load once, transform, write once. The FlashAttention Triton implementation is the canonical reference.
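A rough back-of-the-envelope model shows why "load once, transform, write once" matters. The sketch below assumes a chain of element-wise operations on FP16 data and counts only global-memory traffic; the function name and the tensor size are hypothetical, and real kernels have additional effects (caching, launch overhead) that this ignores.

```python
def elementwise_traffic_bytes(n_elements, n_ops, bytes_per_element=2, fused=False):
    """Estimate global-memory traffic for a chain of element-wise ops.

    Unfused: each op reads its input from global memory and writes its output back.
    Fused: the whole chain reads the input once and writes the final result once.
    """
    passes = 1 if fused else n_ops
    return passes * 2 * n_elements * bytes_per_element  # 2 = one read + one write


n = 4096 * 4096  # hypothetical activation tensor
unfused = elementwise_traffic_bytes(n, n_ops=3)
fused = elementwise_traffic_bytes(n, n_ops=3, fused=True)

print(f"unfused: {unfused / 1e6:.1f} MB")
print(f"fused:   {fused / 1e6:.1f} MB")
print(f"traffic reduction: {unfused / fused:.0f}x")
```

Under these assumptions, fusing a three-op chain cuts memory traffic by 3x, which translates almost directly into runtime for memory-bound operations.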

Built-in autotuning. @triton.autotune lets you define a search space of tile sizes, warp counts, and pipeline stages, then benchmarks each configuration and selects the fastest automatically. Equivalent CUDA autotuning requires building the harness yourself.
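For a sense of what such a harness involves, here is a minimal sketch of an exhaustive autotuner in plain Python. It is not how Triton implements `@triton.autotune`; the `autotune` function, the `SEARCH_SPACE` values, and the `toy_cost` stand-in are all assumptions made so the example runs without a GPU. A real harness would time the kernel on the device with proper synchronization and warm-up.

```python
import itertools
import time


def autotune(run, search_space, measure=None, repeats=5):
    """Try every combination in search_space and return (best_config, best_cost).

    run(config) executes the workload once with that config.
    measure(run, config) returns a cost; defaults to best-of-N wall-clock seconds.
    """
    def wall_clock(run, config):
        best = float("inf")
        for _ in range(repeats):
            start = time.perf_counter()
            run(config)
            best = min(best, time.perf_counter() - start)
        return best

    measure = measure or wall_clock
    names = list(search_space)
    best_config, best_cost = None, float("inf")
    for values in itertools.product(*(search_space[name] for name in names)):
        config = dict(zip(names, values))
        cost = measure(run, config)
        if cost < best_cost:
            best_config, best_cost = config, cost
    return best_config, best_cost


# Hypothetical search space mirroring the knobs named above.
SEARCH_SPACE = {
    "BLOCK_SIZE": [64, 128, 256],
    "num_warps": [2, 4, 8],
    "num_stages": [2, 3],
}


def toy_cost(run, config):
    # Stand-in cost model (not a real measurement) so the sketch is deterministic.
    return (abs(config["BLOCK_SIZE"] - 128)
            + abs(config["num_warps"] - 4)
            + config["num_stages"])


best, cost = autotune(run=lambda config: None, search_space=SEARCH_SPACE, measure=toy_cost)
print(best, cost)
```

Even this toy version has to handle enumeration, repetition, and selection. A production CUDA harness would also need compilation per configuration, result caching, and invalidation when input shapes change.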

Where CUDA Wins

Maximum hardware utilization on specific operations. Warp-level primitives, async memory pipelines, tensor core programming, fine-grained shared memory — CUDA exposes them in ways Triton abstracts away.

Non-standard memory hierarchies. Operations benefiting from explicit L2 cache management, constant memory, or texture memory require CUDA.

Sparse and irregular computation. Sparse matrix operations exploiting specific sparsity patterns are more naturally expressed in CUDA.

Profiling depth. NVIDIA Nsight Compute provides warp-level stall analysis and precise cache miss attribution. Triton profiling is improving but not yet equivalent.

The Decision Framework

Start with Triton if…

1. Implementing attention variants, normalization, activations, or element-wise ops

2. Strong Python skills, limited CUDA experience

3. Need portability across A100, H100, AMD without separate codebases

4. Target is "90%+ of theoretical max" with fast iteration

Start with CUDA if…

1. Profiling identifies a bottleneck Triton's abstractions prevent fixing

2. Sparse or highly irregular computation

3. Need NVIDIA-specific hardware features Triton doesn't expose

4. Target is "99% of theoretical max" with CUDA expertise on the team

OneInfer's Kernel Forge Approach

At OneInfer, we built Kernel Forge around Triton — not because it always achieves max theoretical performance, but because the combination of velocity, portability, and autotuning makes it the right default for the vast majority of LLM inference optimization.

Our autonomous agents generate custom Triton kernels tailored to your model architecture and GPU SKU. The system benchmarks configurations automatically and fuses operations across the model's computation graph to minimize memory bandwidth.

For operations where Triton genuinely limits performance (specific sparse attention patterns, non-standard quantization), we implement in CUDA. But these are exceptions. The result: kernels typically within 5–10% of hand-optimized CUDA performance, with dramatically faster development. Our attention kernel optimization cuts forward-pass latency from 145 ms to 12 ms, roughly a 12x speedup.

What to Benchmark Before Deciding

1. TFLOP/s achieved vs. theoretical peak. An H100 SXM peaks at roughly 990 TFLOP/s of dense FP16 tensor-core throughput (about 1,980 TFLOP/s with structured sparsity). What fraction are you achieving? Read together with bandwidth utilization, this tells you whether the kernel is compute- or memory-bound.

2. Memory bandwidth utilization. Most LLM ops are memory-bandwidth-bound at inference batch sizes.

3. Kernel launch overhead. For small batch sizes, launch overhead can dominate.

4. Warp occupancy. Higher occupancy generally means better latency hiding. NVIDIA's occupancy calculator helps.
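The first two measurements can be combined into a simple roofline check. The sketch below is illustrative only: the H100 SXM peak figures (about 989 TFLOP/s dense FP16 tensor-core throughput and about 3.35 TB/s HBM3 bandwidth) are assumed from the public spec sheet, and the workload and the 60-microsecond timing are made-up placeholders, not measurements.

```python
def roofline_report(flops, bytes_moved, seconds, peak_tflops, peak_tbps):
    """Compare one kernel's achieved throughput against hardware peaks."""
    achieved_tflops = flops / seconds / 1e12
    achieved_tbps = bytes_moved / seconds / 1e12
    intensity = flops / bytes_moved      # FLOP per byte of memory traffic
    ridge = peak_tflops / peak_tbps      # FLOP/byte where the two roofs meet
    return {
        "compute_utilization": achieved_tflops / peak_tflops,
        "bandwidth_utilization": achieved_tbps / peak_tbps,
        "arithmetic_intensity": intensity,
        "ridge_point": ridge,
        "bound": "compute" if intensity >= ridge else "memory",
    }


# Assumed H100 SXM peaks; check the spec sheet for your exact SKU.
H100_PEAK_TFLOPS = 989.0
H100_PEAK_TBPS = 3.35

# Hypothetical decode-time matrix-vector product: batch 1, 8192 x 8192 FP16 weights.
rows = cols = 8192
flops = 2 * rows * cols                            # one multiply + one add per weight
bytes_moved = rows * cols * 2 + (rows + cols) * 2  # weights + input and output vectors
measured_seconds = 60e-6                           # placeholder, not a real measurement

report = roofline_report(flops, bytes_moved, measured_seconds,
                         H100_PEAK_TFLOPS, H100_PEAK_TBPS)
for name, value in report.items():
    print(f"{name}: {value}")
```

In this hypothetical case the arithmetic intensity is about 1 FLOP/byte, far below the ridge point of roughly 295, so the kernel is memory-bound. Its tiny fraction of peak TFLOP/s is expected, and bandwidth utilization is the number worth optimizing.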

Start with Triton. It will get you to excellent inference performance in less time. Reach for CUDA when profiling shows you've hit its ceiling — not before. Learn more about OneInfer's Kernel Forge.

Run multimodal AI inference at production scale

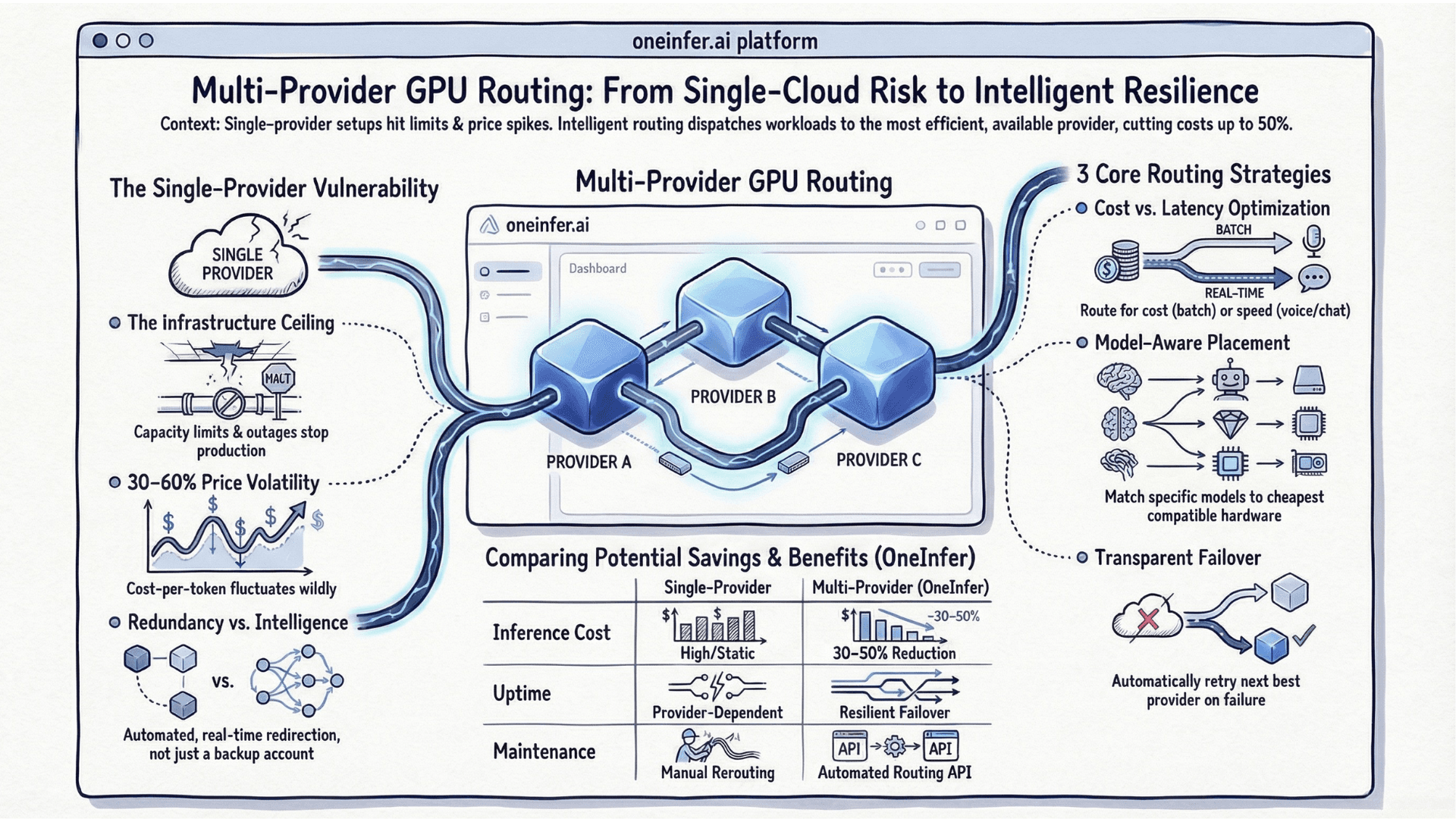

OneInfer routes every request to the optimal GPU across multiple cloud providers in real time, with sub-500ms latency, AI-generated kernel optimization, and transparent pricing.

Frequently asked questions

Should I write GPU kernels in Triton or CUDA in 2026?

Start with Triton for 90% of LLM kernel work. It typically delivers 90–95% of hand-tuned CUDA performance at a fraction of the development time. Reach for CUDA only when profiling proves Triton's abstractions are blocking you.

Is Triton faster than CUDA?

Triton typically achieves 90–95% of hand-tuned CUDA performance on standard LLM operations. CUDA can extract the last few percentage points on specific operations through warp-level primitives and explicit memory hierarchy control.

Does Triton run on AMD GPUs?

Yes. Triton has growing AMD GPU support, which makes it more portable than CUDA across hardware vendors. CUDA is NVIDIA-only.

What is operation fusion in GPU kernels?

Operation fusion merges sequences of GPU operations, such as layer norm followed by linear projection, into a single kernel pass, eliminating round trips to global memory between operations. FlashAttention is the canonical example.

How does OneInfer's Kernel Forge use Triton?

Kernel Forge generates custom Triton kernels tailored to each model architecture and GPU SKU using autonomous agents, autotunes tile sizes and pipeline depths, and fuses operations across the computation graph. It falls back to CUDA only when measurement justifies it.