TL;DR

Enterprise-grade AI inference means four verifiable things: (1) security architecture satisfying SOC 2 Type II, HIPAA BAA, ISO 27001, with data isolation, end-to-end encryption, and adversarial input detection; (2) reliability with 99.9%+ uptime SLAs, multi-region active-active, zero-downtime model updates, and circuit breakers; (3) scalability with predictive autoscaling and contractual burst capacity; (4) observability with request-level audit logging, model version governance, and business-unit cost attribution.

What "Enterprise-Grade" Means in Practice

The term "enterprise-grade" is applied so broadly in AI infrastructure marketing that it is nearly meaningless. Every platform claims it. Almost no documentation specifies it.

For this guide, enterprise-grade inference means four specific, verifiable things:

1. Security architecture satisfying the compliance requirements of regulated industries (healthcare, financial services, government), not just general commercial use.

2. Reliability architecture delivering 99.9%+ uptime SLAs with contractual consequences, backed by multi-region redundancy and automated failover.

3. Scalability architecture handling 10x traffic spikes without degraded performance or manual intervention.

4. Observability architecture providing the audit trail depth required for compliance reporting, incident investigation, and model governance.

Platforms meeting all four are a small subset of top inference platforms in 2026.

Security Architecture for Enterprise AI

Data isolation is the first requirement. In multi-tenant inference, your model weights, requests, cached results, and output logs must be completely isolated from other tenants at compute, storage, and network layers. Shared GPU memory between tenants without cryptographic isolation is a security boundary failure regulated enterprises cannot accept.

Encryption at every layer is non-negotiable. Model weights should be encrypted at rest with customer-managed keys. All requests and responses should be encrypted in transit with TLS 1.3. Inference logs should be encrypted at rest, with configurable retention and automatic purging. AWS KMS and equivalent services enable customer-managed key architectures.

Adversarial input detection is an emerging requirement as AI systems become targets for prompt injection, jailbreak, and data extraction attacks. Production enterprise inference should include input validation layers that detect and block known attack patterns before they reach the model, and that log every blocked request.
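As a rough illustration of such a validation layer, the sketch below checks a prompt against a small deny-list of regular expressions and logs anything it blocks. The pattern names, the patterns themselves, and the `validate_input` function are hypothetical; pattern matching catches only known attack phrasings and would be one layer among several in a real deployment.

```python
import logging
import re
from dataclasses import dataclass, field

logger = logging.getLogger("inference.input_validation")

# Hypothetical deny-list. Real deployments would maintain and tune their own rules.
BLOCKED_PATTERNS = {
    "instruction_override": re.compile(
        r"ignore (all )?(previous|prior) instructions", re.IGNORECASE
    ),
    "system_prompt_probe": re.compile(
        r"(reveal|print|show) (your )?system prompt", re.IGNORECASE
    ),
}


@dataclass
class ValidationResult:
    allowed: bool
    matched_rules: list = field(default_factory=list)


def validate_input(prompt: str) -> ValidationResult:
    """Block the prompt if it matches any known pattern, and log the decision."""
    matched = [name for name, rx in BLOCKED_PATTERNS.items() if rx.search(prompt)]
    if matched:
        # Log rule names only, not the raw prompt, to avoid storing sensitive input.
        logger.warning("blocked request, matched rules: %s", matched)
    return ValidationResult(allowed=not matched, matched_rules=matched)
```

A request is forwarded to the model only when `validate_input(prompt).allowed` is true.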

Compliance certifications translate architecture into verifiable credentials. SOC 2 Type II verifies that controls operate effectively over time. A HIPAA Business Associate Agreement (BAA) is required for healthcare workloads. ISO 27001 is widely required in enterprise procurement worldwide.

OneInfer's enterprise architecture is built around SOC 2 Type II with end-to-end encryption, dedicated tenant infrastructure options, and configurable retention. Contact the team for compliance architecture review.

Reliability Architecture for Enterprise SLAs

Enterprise reliability starts where startup aspirations end. 99.9% sounds similar to 99.5%: they differ by only 0.4 percentage points. But 99.9% allows 8.76 hours of downtime per year, while 99.5% allows 43.8 hours. For an enterprise running customer-facing applications, that gap is the difference between a manageable incident and a regulatory filing.
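The downtime figures follow directly from the number of hours in a year. A minimal check, assuming a 365-day year (the function name is illustrative):

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years


def allowed_downtime_hours(uptime_percent: float) -> float:
    """Hours per year a service may be down while still meeting the SLA."""
    return HOURS_PER_YEAR * (1 - uptime_percent / 100)


for sla in (99.9, 99.5):
    print(f"{sla}% uptime -> {allowed_downtime_hours(sla):.2f} hours/year")
```

This prints 8.76 hours for 99.9% and 43.80 hours for 99.5%, a fivefold difference.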

Multi-region deployment is the foundation. Active-active deployment across availability zones ensures that a single-zone failure triggers automatic failover. For globally distributed enterprises, multi-region deployment also addresses data residency requirements under GDPR and equivalent regulations.

Zero-downtime model updates matter for enterprises that cannot wait for a maintenance window to deploy model improvements. Blue-green deployment is the standard approach: run the new model alongside the current one, validate it with shadow traffic, then shift production traffic incrementally.
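The incremental shift can be sketched as a deterministic, percentage-based split. This is a minimal illustration, not any platform's rollout mechanism: `route_version`, `next_step`, the rollout schedule, and the 1% error threshold are all assumptions, and the shadow-traffic validation stage is omitted.

```python
import hashlib

# Hypothetical schedule: percent of production traffic sent to the new ("green") model.
ROLLOUT_STEPS = [0, 5, 25, 50, 100]


def route_version(request_id: str, green_percent: int) -> str:
    """Deterministically assign a request to 'green' (new) or 'blue' (current)."""
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100  # stable bucket in [0, 100)
    return "green" if bucket < green_percent else "blue"


def next_step(current_percent: int, error_rate: float, max_error_rate: float = 0.01) -> int:
    """Advance to the next rollout step if healthy, otherwise roll back to 0%."""
    if error_rate > max_error_rate:
        return 0
    idx = ROLLOUT_STEPS.index(current_percent)
    return ROLLOUT_STEPS[min(idx + 1, len(ROLLOUT_STEPS) - 1)]
```

Hashing the request ID keeps assignment stable: a request that lands on the new model at 25% stays there at 50%, which makes comparisons between versions easier to interpret.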

Circuit breakers and graceful degradation prevent cascading failures. When one provider or backend begins failing, circuit breakers automatically stop routing traffic to the failing component.
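A minimal sketch of the circuit breaker pattern follows. The class, its thresholds, and the injectable `clock` parameter are illustrative assumptions, not a specific library's API; production implementations usually also limit how many trial requests pass once the timeout expires.

```python
import time


class CircuitBreaker:
    """Stops routing to a backend after repeated failures, then retries later."""

    def __init__(self, failure_threshold=3, reset_timeout=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout  # seconds to wait before a trial request
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed (healthy)

    def allow_request(self) -> bool:
        if self.opened_at is None:
            return True
        # Once the timeout has elapsed, let trial requests through (half-open).
        return self.clock() - self.opened_at >= self.reset_timeout

    def record_success(self) -> None:
        self.failures = 0
        self.opened_at = None  # close the circuit again

    def record_failure(self) -> None:
        self.failures += 1
        if self.failures >= self.failure_threshold:
            self.opened_at = self.clock()  # open, or re-open after a failed trial
```

The router would call `allow_request()` before sending traffic to a backend and fall back to another backend, or a degraded response, when it returns false.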

Contractual SLA commitments with defined remedies distinguish enterprise platforms from aspirational SLAs. If a violation results only in service credits equivalent to a few hours of compute cost, the provider's economic incentive to maintain the SLA is weak.

Scalability Architecture for Enterprise Traffic Patterns

Enterprise traffic differs from startup traffic. It combines predictable periodic spikes (Monday morning reports, end-of-month financials, product launches) with unpredictable demand driven by external events.

Predictive autoscaling uses historical patterns and known business calendar events to provision capacity ahead of demand rather than reacting after it arrives. An enterprise financial app generating reports the first business day of every month should have additional capacity warm thirty minutes before.
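The calendar-driven half of this idea can be sketched as follows. The example covers only known scheduled events with a thirty-minute warm-up lead, not forecasting from historical patterns; `ScheduledEvent`, `desired_replicas`, and the replica counts are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

WARMUP_LEAD = timedelta(minutes=30)  # provision this far ahead of each event


@dataclass
class ScheduledEvent:
    name: str
    start: datetime
    end: datetime
    extra_replicas: int


def desired_replicas(now: datetime, baseline: int, events: list) -> int:
    """Baseline capacity plus extra replicas for events that are active or warming up."""
    extra = sum(
        e.extra_replicas
        for e in events
        if e.start - WARMUP_LEAD <= now < e.end
    )
    return baseline + extra


# Hypothetical month-start reporting window.
month_start_reports = ScheduledEvent(
    name="month-start financial reports",
    start=datetime(2026, 2, 2, 9, 0),
    end=datetime(2026, 2, 2, 12, 0),
    extra_replicas=6,
)
print(desired_replicas(datetime(2026, 2, 2, 8, 45), baseline=4, events=[month_start_reports]))
```

At 08:45 the event has not started, but the warm-up window has, so the sketch already requests the extra capacity.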

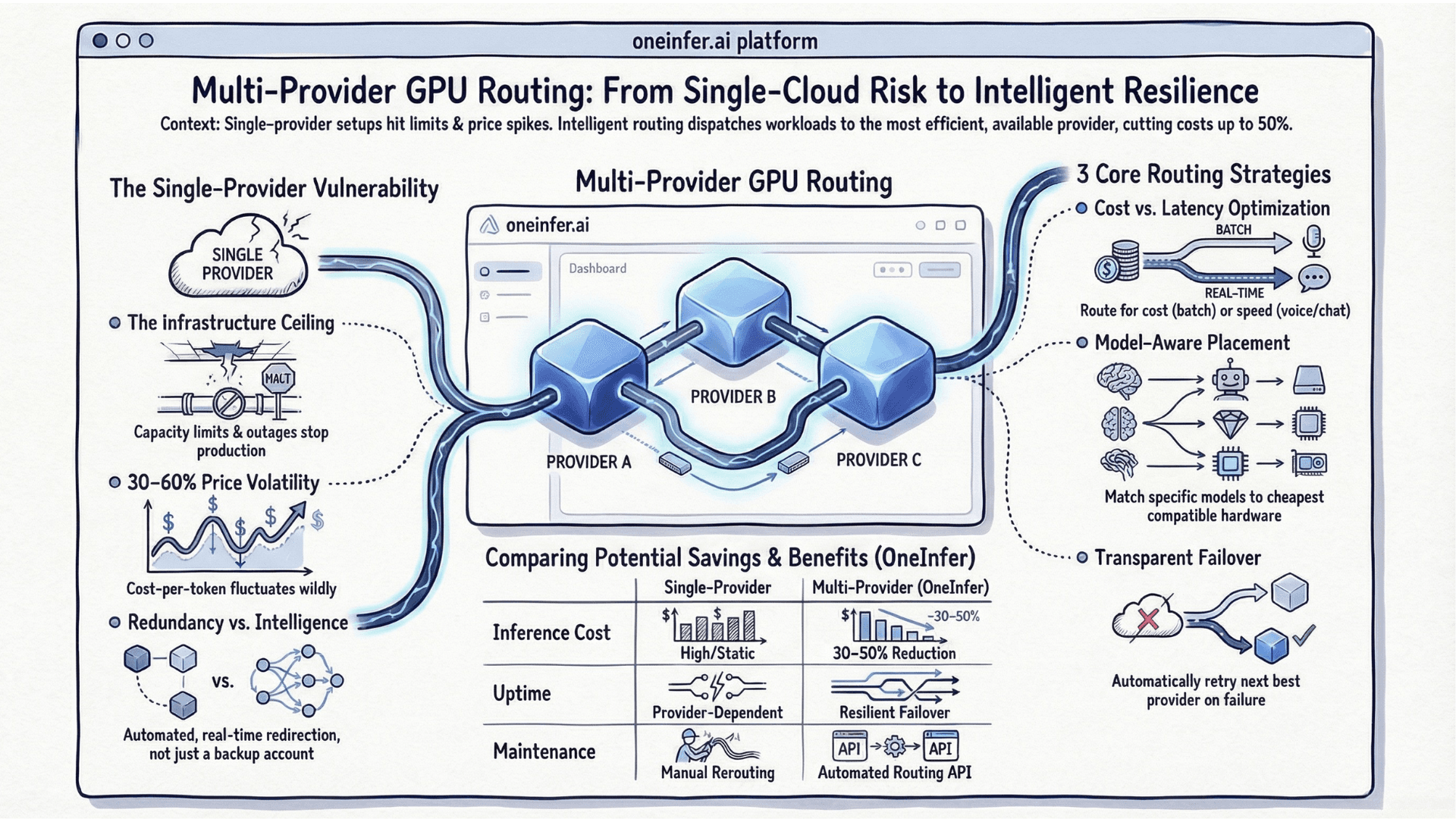

Burst capacity guarantees ensure that spikes beyond baseline are absorbed without degradation. OneInfer's multi-provider architecture provides burst capacity by distributing traffic across multiple GPU clouds simultaneously. When any single provider hits capacity, traffic routes automatically to available capacity on others.
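The overflow behavior described above can be illustrated with a simple priority-ordered selection. This is a generic sketch, not OneInfer's actual routing logic; the `Provider` fields, provider names, and capacity numbers are invented for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Provider:
    name: str
    capacity: int   # maximum concurrent requests the provider can serve
    in_flight: int  # requests currently being served


def pick_provider(providers: list, needed: int = 1) -> Optional[str]:
    """Return the first provider, in priority order, with enough free capacity."""
    for p in providers:
        if p.capacity - p.in_flight >= needed:
            return p.name
    return None  # every provider is saturated; caller should queue or shed load


pool = [
    Provider("gpu-cloud-a", capacity=100, in_flight=100),  # at capacity
    Provider("gpu-cloud-b", capacity=80, in_flight=35),
]
print(pick_provider(pool))  # spills over to the second provider
```

A real router would also weigh latency, cost, and model availability per provider rather than free capacity alone.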

Observability for Compliance and Governance

Enterprise AI observability goes beyond latency dashboards. Regulated industries require audit trails demonstrating how AI systems behave, what decisions they influence, and how behaviors change.

Request-level audit logging captures the full inference context (model version, input and output hashes, latency, GPU node) for compliance investigations and governance reviews. Logs should be tamper-evident, carry cryptographically verifiable timestamps, and be retained according to regulatory requirements.
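One common way to make such a log tamper-evident is a hash chain, where each record includes the hash of the previous one. The sketch below assumes that technique and uses hypothetical field names; it stores hashes of the prompt and output rather than the raw text, and it does not cover trusted timestamping or retention.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def append_record(log, *, timestamp, model_version, prompt, output, latency_ms, gpu_node):
    """Append an audit record linked to the previous record by its hash."""
    body = {
        "timestamp": timestamp,
        "model_version": model_version,
        "input_hash": sha256_hex(prompt.encode("utf-8")),
        "output_hash": sha256_hex(output.encode("utf-8")),
        "latency_ms": latency_ms,
        "gpu_node": gpu_node,
        "prev_hash": log[-1]["record_hash"] if log else GENESIS_HASH,
    }
    record_hash = sha256_hex(json.dumps(body, sort_keys=True).encode("utf-8"))
    log.append({**body, "record_hash": record_hash})


def verify_chain(log) -> bool:
    """Recompute every hash; any edited, removed, or reordered record breaks the chain."""
    prev = GENESIS_HASH
    for rec in log:
        body = {k: v for k, v in rec.items() if k != "record_hash"}
        if body["prev_hash"] != prev:
            return False
        if sha256_hex(json.dumps(body, sort_keys=True).encode("utf-8")) != rec["record_hash"]:
            return False
        prev = rec["record_hash"]
    return True
```

Because each record commits to its predecessor, altering an old entry invalidates every hash after it, which is what an auditor checks with `verify_chain`.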

Model version governance records which version was deployed when, who approved it, and what evaluation criteria it met before promotion. It is the foundation that enterprise risk teams require.

Cost attribution at the business-unit level allocates costs to products, teams, and cost centers for budgeting and chargeback. Without this granularity, AI infrastructure becomes an unallocated shared cost that obscures true economics.
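In its simplest form, attribution is an aggregation over tagged usage records. The sketch below assumes each record carries a `cost_center` tag and a `gpu_seconds` figure, and that pricing is a flat per-GPU-second rate; all of these names and numbers are illustrative.

```python
from collections import defaultdict


def attribute_costs(usage_records, price_per_gpu_second: float) -> dict:
    """Sum inference cost per cost center; untagged usage is reported separately."""
    totals = defaultdict(float)
    for rec in usage_records:
        center = rec.get("cost_center", "unallocated")
        totals[center] += rec["gpu_seconds"] * price_per_gpu_second
    return dict(totals)


records = [
    {"cost_center": "search", "gpu_seconds": 10},
    {"cost_center": "search", "gpu_seconds": 20},
    {"cost_center": "support-bot", "gpu_seconds": 4},
    {"gpu_seconds": 2},  # missing tag ends up in "unallocated"
]
print(attribute_costs(records, price_per_gpu_second=0.5))
```

Surfacing the "unallocated" bucket explicitly makes it visible how much spend still lacks an owner.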

OneInfer's unified observability provides signal depth required for enterprise monitoring, with per-provider tracking, attribution, and audit-ready logging.

Enterprise AI is a different operational discipline with different requirements, failure consequences, and evaluation criteria than startup AI. The top inference platforms in 2026 serving enterprise reliably are those built with security, reliability, and governance as first-class design requirements. Visit oneinfer.ai or contact the team.


Frequently asked questions

What does enterprise-grade AI inference actually mean?

Four verifiable things: SOC 2 Type II / HIPAA BAA / ISO 27001 compliance, 99.9%+ contractual uptime SLAs with multi-region failover, predictive autoscaling with burst capacity guarantees, and audit-grade observability covering request logging and model version governance.

What's the difference between 99.9% and 99.5% uptime SLA?

99.9% allows 8.76 hours of downtime per year. 99.5% allows 43.8 hours, a 5x difference. For enterprise customer-facing AI applications, that's the gap between a manageable incident and a regulatory filing.

Is OneInfer SOC 2 compliant?

OneInfer's enterprise security architecture is built around SOC 2 Type II compliance with end-to-end encryption, dedicated tenant infrastructure options, and configurable data retention. Contact the team directly for current attestation status and a compliance architecture review.

How does multi-region deployment improve AI inference reliability?

Active-active deployment across availability zones ensures a single zone failure triggers automatic failover without manual intervention or downtime. For globally distributed enterprises, multi-region also addresses GDPR-style data residency requirements.

What is zero-downtime AI model updating?

Blue-green deployment: run the new model version alongside the current version, validate behavior with shadow production traffic, then shift real traffic incrementally with rollback capability throughout. No maintenance window required.